Visual Sonar

Mobile robot navigation using visual sonar via omnidirectional vision system

The Visual Sonar project targets affordable mobile robot navigation by converting images from an omnidirectional camera into sonar-like range readings. Inspired by the natural sonar of bats and dolphins, the approach enables autonomous navigation without expensive laser scanners or complex sensor arrays.

This research demonstrates that sophisticated navigation and mapping capabilities can be achieved using minimal hardware (a single omnidirectional camera) while maintaining performance comparable to expensive RGB-D cameras and laser-based systems. This makes advanced robotics more accessible and economically viable across a range of applications.

At the heart of this research is a novel sonar vision algorithm that processes omnidirectional images without requiring any prior calibration. The system autonomously detects both static and dynamic obstacles in unknown environments, providing real-time navigation capabilities that rival traditional sensor-based approaches.

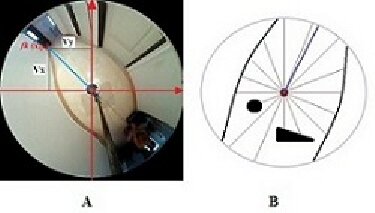

Visual Sonar algorithm concept: transforming omnidirectional visual data into sonar-like distance measurements
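The idea of turning an omnidirectional image into sonar-like readings can be illustrated with a minimal sketch. It assumes, as a simplification not stated in the source, that an earlier step has already produced a binary mask of obstacle boundaries, and that a "sonar" reading is the pixel distance from the image center to the first marked pixel along a ray. The names `visual_sonar`, `mask`, `center`, and `num_rays` are hypothetical; the project's actual algorithm may differ.

```python
import math


def visual_sonar(mask, center, num_rays=36, max_radius=None):
    """Cast rays from the image center over a binary obstacle mask.

    mask:   list of rows; a truthy value marks an obstacle-boundary pixel
            (hypothetical input, assumed to come from an earlier step).
    center: (cx, cy) pixel coordinates of the mirror center.
    Returns a list of (angle_rad, distance_px); distance_px is None when
    the ray leaves the search radius without hitting anything.
    """
    height, width = len(mask), len(mask[0])
    cx, cy = center
    if max_radius is None:
        # Largest radius that stays inside the image in every direction.
        max_radius = min(cx, cy, width - 1 - cx, height - 1 - cy)

    readings = []
    for i in range(num_rays):
        # Image y grows downward, so angles increase clockwise on screen.
        angle = 2.0 * math.pi * i / num_rays
        hit = None
        for r in range(1, max_radius + 1):
            x = int(round(cx + r * math.cos(angle)))
            y = int(round(cy + r * math.sin(angle)))
            if not (0 <= x < width and 0 <= y < height):
                break
            if mask[y][x]:
                hit = r
                break
        readings.append((angle, hit))
    return readings
```

For a 21x21 mask with a vertical wall five pixels to the right of the center, the ray at angle 0 reports a distance of 5 pixels. The readings are in pixels; converting them to metric ranges would depend on the mirror geometry, which this sketch does not model.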

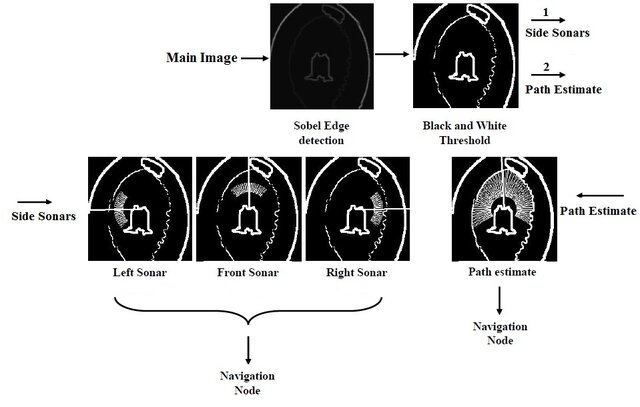

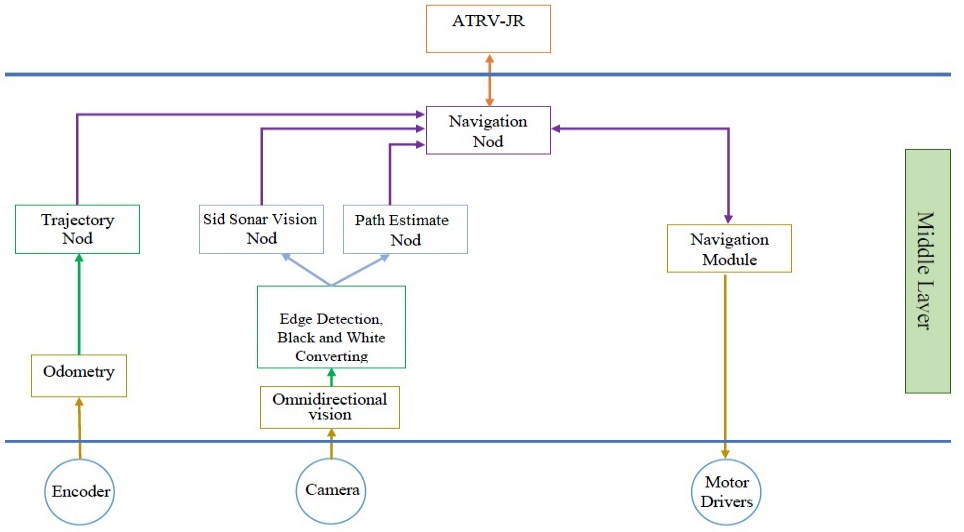

The Visual Sonar system employs a sophisticated multi-layer architecture designed for robust real-time processing. The system transforms raw visual data through several specialized layers, each optimized for specific tasks while maintaining parallel processing capabilities.

Multi-layer image processing architecture enabling real-time visual sonar transformation

1. Raw omnidirectional image acquisition from 360-degree camera systems
2. Image enhancement, noise reduction, and light reflection removal
3. Identification of visual landmarks and obstacle boundaries
4. Transformation of visual information into sonar-like distance measurements
5. Side Sonar Vision with independent zone analysis
6. Path planning and navigation command generation
7. Real-time control signals for robot movement and mapping

The navigation system integrates multiple specialized nodes working simultaneously to ensure robust robot control. Each node handles specific aspects of navigation while contributing to the overall decision-making process.

Comprehensive robot control architecture showing node integration and data flow

- Calculates environmental data and produces velocity commands based on sonar vision analysis. Uses adaptive speed control where closer obstacles result in reduced forward speed and increased turning precision.
- Monitors three strategic zones (front, right, left) independently, providing comprehensive environmental awareness with angle and length data for each zone.
- Receives odometry information from motor encoders and generates predetermined paths. Creates baseline navigation plans when no obstacles are detected.
- Central decision-making unit that intelligently switches between trajectory following and obstacle avoidance based on real-time environmental analysis.
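The adaptive speed control and the switching behavior described above can be sketched as follows. This is an illustration under stated assumptions, not the project's controller: the functions `avoidance_command` and `select_command`, the parameters `max_speed`, `max_turn`, and `safe_dist`, and the linear scaling law are all hypothetical. The sketch reads "increased turning precision" as a stronger turning command near obstacles, which is an interpretation.

```python
def avoidance_command(front_dist, left_dist, right_dist,
                      max_speed=0.5, max_turn=1.0, safe_dist=2.0):
    """Return (linear, angular) velocity for obstacle avoidance.

    Closer obstacles ahead reduce forward speed and increase the turn rate.
    All parameter names and default values are illustrative assumptions.
    """
    # Proximity in [0, 1]: 0 means nothing within safe_dist, 1 means contact.
    proximity = max(0.0, min(1.0, 1.0 - front_dist / safe_dist))
    linear = max_speed * (1.0 - proximity)
    # Turn toward the side with more free space (positive = left, assumed).
    direction = 1.0 if left_dist >= right_dist else -1.0
    angular = direction * max_turn * proximity
    return linear, angular


def select_command(trajectory_cmd, front_dist, left_dist, right_dist,
                   safe_dist=2.0):
    """Follow the planned trajectory unless an obstacle is closer than safe_dist."""
    if front_dist >= safe_dist:
        return trajectory_cmd
    return avoidance_command(front_dist, left_dist, right_dist,
                             safe_dist=safe_dist)
```

With a clear path ahead, `select_command` passes the trajectory command through unchanged. With an obstacle at half of `safe_dist` and more room on the left, the forward speed is halved and the robot turns left at half of `max_turn`.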

A key contribution of this research is the Side Sonar Vision (SSV) system, which divides the robot's environment into three monitoring zones. Each zone is handled by an independent agent that analyzes its data separately, giving the robot awareness in multiple directions at once.

- Front zone: primary navigation zone for forward movement and obstacle detection
- Right zone: right-side monitoring for corridor navigation and wall following
- Left zone: left-side monitoring for comprehensive environmental awareness

The SSV system enables adaptive control where larger angle and length parameters maintain greater distances from obstacles, while smaller parameters allow closer navigation. This flexibility ensures both safety and efficiency across various operational scenarios.
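One way to picture the angle and length parameters is to treat each zone as a circular sector around the robot. The sketch below assumes that interpretation, which the source does not spell out. `Zone`, `angle_diff`, and `zone_status` are hypothetical names, readings are assumed to be `(bearing_rad, distance)` pairs with bearing 0 pointing straight ahead, and left is taken as positive bearing.

```python
import math
from dataclasses import dataclass


@dataclass
class Zone:
    name: str
    center: float  # sector heading in radians, 0 = straight ahead
    angle: float   # full angular width of the sector in radians
    length: float  # radial reach of the sector, same unit as the readings


def angle_diff(a, b):
    """Smallest signed difference between two angles, in [-pi, pi)."""
    return (a - b + math.pi) % (2.0 * math.pi) - math.pi


def zone_status(zones, readings):
    """Map each zone name to its closest reading, or None if the zone is clear."""
    status = {}
    for zone in zones:
        hits = [dist for bearing, dist in readings
                if dist is not None
                and abs(angle_diff(bearing, zone.center)) <= zone.angle / 2.0
                and dist <= zone.length]
        status[zone.name] = min(hits) if hits else None
    return status
```

Widening `angle` or increasing `length` makes a zone report obstacles earlier, so the robot keeps a larger clearance. Smaller values let it pass closer, which matches the trade-off described above.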

Extensive research has been conducted on optimizing omnidirectional vision through advanced mirror configurations. Four different mirror types were designed and evaluated to determine optimal performance characteristics.

- Compact design with variable pixel density distribution
- Optimal performance configuration with consistent pixel density
- Extended field of view with variable density mapping
- Alternative configuration for specific application requirements

The findings show that small hyperbolic mirrors with uniform pixel density provide the best performance for vision-based mobile robot navigation, offering the best balance between image quality and processing efficiency.

Advanced image processing techniques identify and remove unwanted light reflections from mirror surfaces, ensuring cleaner visual data for sonar algorithms. This preprocessing step is essential for maintaining navigation reliability across varying lighting conditions.

Advanced light reflection removal process for enhanced image quality
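The source does not describe how reflections are detected or removed. As a rough illustration of the general idea only, the sketch below treats near-saturated pixels in a grayscale image as reflections and replaces each with the median of its unsaturated neighbors. The function name `remove_reflections`, the `threshold` value, and the 3x3 neighborhood are assumptions for this example, not the project's method.

```python
from statistics import median


def remove_reflections(image, threshold=240):
    """Replace near-saturated pixels with the median of unsaturated 8-neighbors.

    image: grayscale image as a list of rows of ints in [0, 255].
    Returns a new image; the input is left unchanged.
    """
    height, width = len(image), len(image[0])
    cleaned = [row[:] for row in image]
    for y in range(height):
        for x in range(width):
            if image[y][x] < threshold:
                continue  # not bright enough to count as a reflection
            neighbors = [image[ny][nx]
                         for ny in range(max(0, y - 1), min(height, y + 2))
                         for nx in range(max(0, x - 1), min(width, x + 2))
                         if (ny, nx) != (y, x) and image[ny][nx] < threshold]
            if neighbors:
                cleaned[y][x] = int(median(neighbors))
    return cleaned
```

A single-pass filter like this only handles small highlights. Larger saturated regions keep their value where no unsaturated neighbor exists and would need an iterative or inpainting-based approach.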

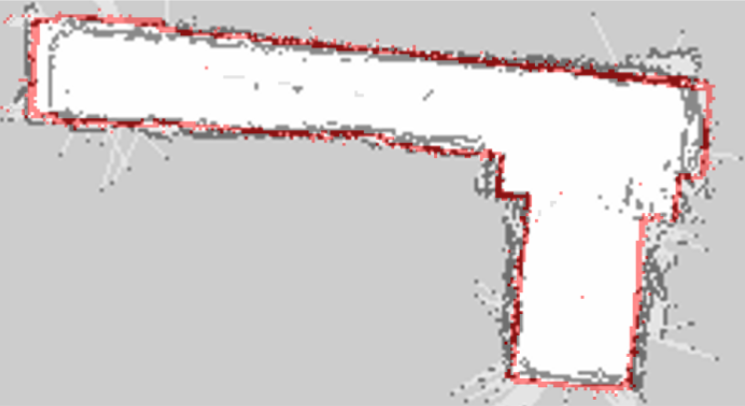

Beyond navigation, the research extends to comprehensive mapping capabilities using only a single omnidirectional camera. By combining visual sonar data with robot odometry, the system generates accurate maps suitable for robot navigation at a fraction of traditional mapping system costs.

Mapping results comparison: Visual Sonar output vs. laser-based sensors (highlighted in red)
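Combining range readings with odometry amounts to projecting each reading from the robot frame into the world frame and recording where obstacles were seen. The sketch below assumes readings are `(bearing_rad, distance)` pairs already expressed in metric units, and that odometry supplies a pose `(x, y, theta)`. The grid representation and the names `readings_to_world`, `update_map`, and `cell_size` are hypothetical.

```python
import math


def readings_to_world(pose, readings):
    """Project (bearing, distance) readings from the robot frame to the world frame.

    pose: (x, y, theta) taken from odometry; theta in radians.
    Readings with distance None (no obstacle seen) are skipped.
    """
    x, y, theta = pose
    points = []
    for bearing, dist in readings:
        if dist is None:
            continue
        points.append((x + dist * math.cos(theta + bearing),
                       y + dist * math.sin(theta + bearing)))
    return points


def update_map(occupied, pose, readings, cell_size=0.1):
    """Mark the grid cells that contain an obstacle point.

    occupied: set of (col, row) cell indices, updated in place and returned.
    """
    for wx, wy in readings_to_world(pose, readings):
        occupied.add((math.floor(wx / cell_size), math.floor(wy / cell_size)))
    return occupied
```

Calling `update_map` once per processed frame accumulates obstacle cells as the robot moves. Because the result inherits any odometry drift, map accuracy depends on how well the odometry is calibrated.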

Omnidirectional camera with 360° field of view, no calibration required

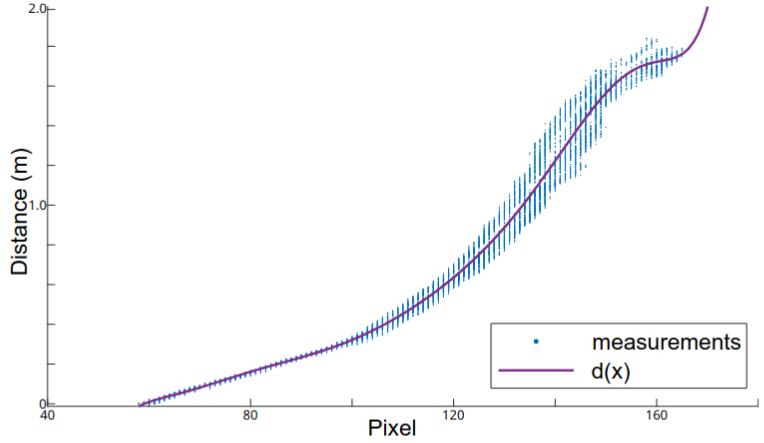

Real-time processing in approximately 120 milliseconds

Up to 98% path tracking accuracy with collision-free navigation

Compatible with various mobile robotic platforms and sensor configurations

ATRV Jr mobile robot platform used in experimental validation

Calibration and polynomial odometry fitting process for enhanced accuracy

The project repository contains the complete source code, documentation, and implementation details for Visual Sonar. It includes the full ROS implementation, the Visual Sonar algorithms, the Side Sonar Vision (SSV) components, experimental datasets, and configuration files for omnidirectional camera systems.